You’ve written the content, created the image, and targeted the right audience, and now your marketing campaign is finally live. It’s tempting at this stage to sit back and relax, safe in the knowledge that your messages are being sent and doing exactly what they’re meant to do. After all that work, surely there’s no way they could be improved upon?

A crucial but often-overlooked part of the marketing process is split testing, also known as A/B testing. As the name suggests, split testing compares the results from two or more versions of content. It’s proof that there’s always more work to be done to ensure your marketing campaigns are achieving their goals.

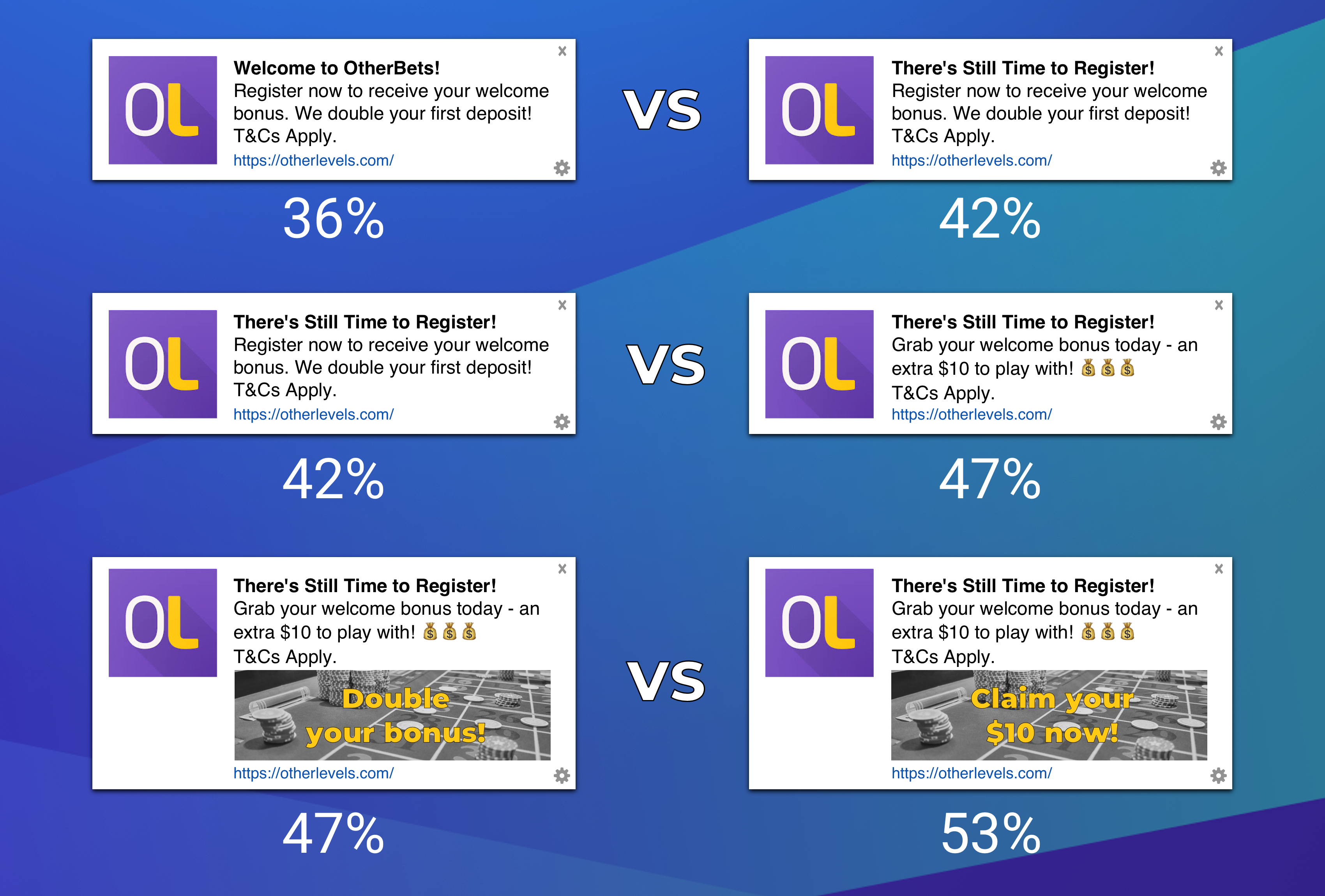

At OtherLevels we’ve always championed the importance of split testing as a key part of the marketing cycle to boost engagement results. Our method is simple: test slight variations of your message, one at a time, over a few weeks, and you’ll find that your engagement rates can increase by as much as 125%.

What does split testing require?

For a simple push message, you may wish to test versions of the text, title, and image content. With OtherLevels you have total flexibility; we give you the ability to compare as many versions as you like. For instance, you may choose to split test three messages at once, keeping two main variants in play to switch back to at any time while rotating the third so you can regularly change content and experiment with new ideas.

For simplicity you may want to compare messages with only one variable changed, or if you need to rapidly adjust the campaign you may choose to change multiple things at once, creating as many variations as you need. As results come in or new ideas are born, these split messages can be created and deleted dynamically, allowing you to improve results over time without having to restart an entire campaign.

Each message split can be sent to as many or as few users as you want, and you decide by percentage how many recipients each message will have. Say you are testing two versions of two different variables, giving you four variations to test at once. You can easily send each of these to just 20% of your audience while keeping the remaining 20% as a control group, which makes the results clearer to compare.
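To make the percentage split concrete, here is a minimal Python sketch of one way the four-variations-plus-control example could be allocated. It is an illustration only, not the OtherLevels API: the split names, the `assign_split` function, and the hash-based bucketing are all assumptions made for this example.

```python
import hashlib

# Hypothetical split definition: four message variations plus a control
# group, each receiving 20% of the audience. Names are illustrative only.
SPLITS = [
    ("text_A_image_A", 20),
    ("text_A_image_B", 20),
    ("text_B_image_A", 20),
    ("text_B_image_B", 20),
    ("control", 20),
]


def assign_split(user_id: str, splits=SPLITS) -> str:
    """Deterministically map a user to one split according to its share."""
    if sum(share for _, share in splits) != 100:
        raise ValueError("split percentages must sum to 100")

    # Hash the user ID so the same user always lands in the same bucket (0-99).
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100

    # Walk the cumulative percentage ranges until the bucket falls inside one.
    upper_bound = 0
    for name, share in splits:
        upper_bound += share
        if bucket < upper_bound:
            return name
    raise AssertionError("unreachable when shares sum to 100")


if __name__ == "__main__":
    for user in ("user-1", "user-2", "user-3"):
        print(user, "->", assign_split(user))
```

Hashing the user ID, rather than picking a split at random on every send, is one design choice that keeps each recipient in the same split for the life of the test, so results for a variation are not diluted by users who also saw another one.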

What does it achieve?

The results speak for themselves. A recent client conversion campaign, with the goal of encouraging anonymous users to register, was able to double engagement rates just by tweaking the text content. By adding specific values to the welcome offer shown and clarifying what users needed to do in order to access this offer, click-through rates increased from an average of 2.1% to 4.2%. That’s a 100% uplift from a change in offer phrasing alone. Over a longer time frame the extra clicks add up with every message sent, so it’s key to find the right piece of content early in the marketing cycle to keep results as high as possible.
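The uplift figure is simply the relative change between the two click-through rates. As a short sketch, the arithmetic looks like this in Python; the function name `relative_uplift` is a hypothetical helper for this example, not part of any OtherLevels tooling.

```python
def relative_uplift(baseline_rate: float, variant_rate: float) -> float:
    """Return the percentage change of variant_rate relative to baseline_rate."""
    if baseline_rate <= 0:
        raise ValueError("baseline rate must be greater than zero")
    return (variant_rate - baseline_rate) / baseline_rate * 100


if __name__ == "__main__":
    # Click-through rates from the conversion campaign above, in percent.
    uplift = relative_uplift(2.1, 4.2)
    print(f"{uplift:.0f}% uplift")  # prints "100% uplift"
```

The same calculation applies to any pair of rates, which makes it easy to compare splits that start from very different baselines.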

For image split testing the results can be even more drastic, with one client boosting engagement from 0.12% to 0.81%, nearly a sevenfold increase! Extrapolated across a full campaign, you can imagine what a huge difference this would make.